PROJECT SNAPSHOT

- Domain. Pearl is Zillow’s internal AI‑powered “connection engine” that links a home‑shopper (“Beth”) to an available agent (“Alan”) in < 90 seconds.

- Mandate. Redesign the experience around speed, clarity, and trust—while meeting WCAG AA and seeding a reusable design‑token system.

- Core Deliverables. NLP‑driven UI, service blueprint, end‑to‑end journey map, scalable design library.

- Squad. 1 PM, 2 tech leads, 4 engineers, 2 researchers, 1 PMO, and me as Design Lead.

CHALLENGE

- Vanishing Leads: Fewer than 2 % of shoppers who clicked “Contact Agent” ever confirmed a relationship.

- Data Blind‑Spots: In 94 % of hand‑offs, the system could not verify whether agent and shopper had actually spoken.

- Slow, Error‑Prone UI: Agents needed seven clicks and about two minutes to log a single call.

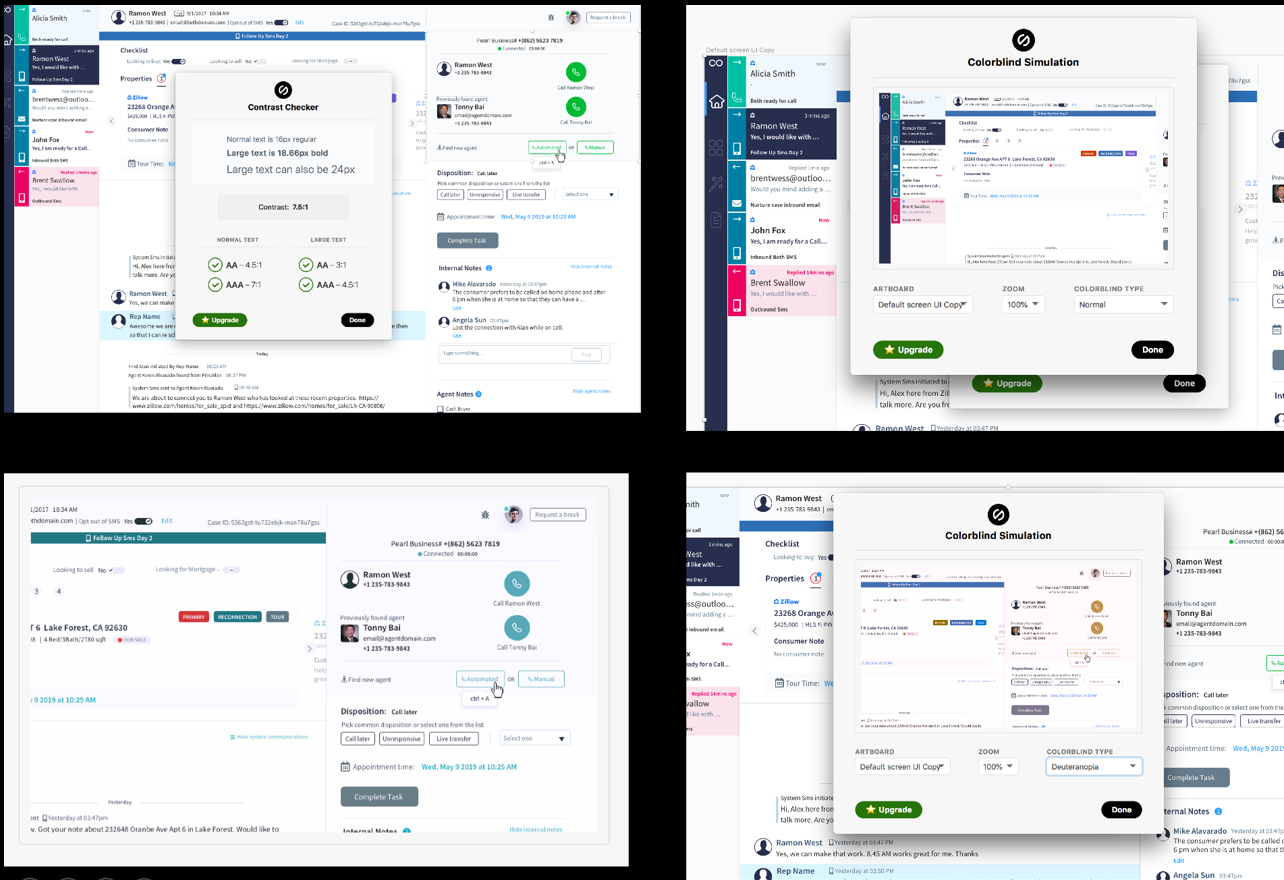

- Accessibility Debt: A dark‑mode palette failed WCAG AA on nearly one‑third of critical screens.

SUMMARY

Pearl began as a proof‑of‑concept meant to connect house‑hunters (“Beths”) and real‑estate agents (“Alans”) in under 90 seconds. What Zillow actually had was a fragile prototype that confused agents, overwhelmed the support team, and delivered little proof of value. I was asked to turn that prototype into a flagship AI workflow—one worthy of Zillow’s vision of “turning on the lights” for every housing decision. Over twelve intense months I led the end‑to‑end design effort: reframing the problem, directing multi‑site research, co‑crafting an AI‑first service blueprint, and seeding the design‑ops foundations that now power three additional internal tools. Conversion, accuracy, and satisfaction surged, but just as important, design became a board‑level lever for trust, ethics, and velocity.

LEADERSHIP APPROACH

- Story‑Driven Alignment: Wrote a future‑state “press release” that painted launch‑day success in plain language. Shared with executives, engineers, operations, and support so everyone aimed at the same outcome.

- Evidence Culture: Embedded a researcher on the call floor for two weeks; every sprint demo began with a short agent audio clip.

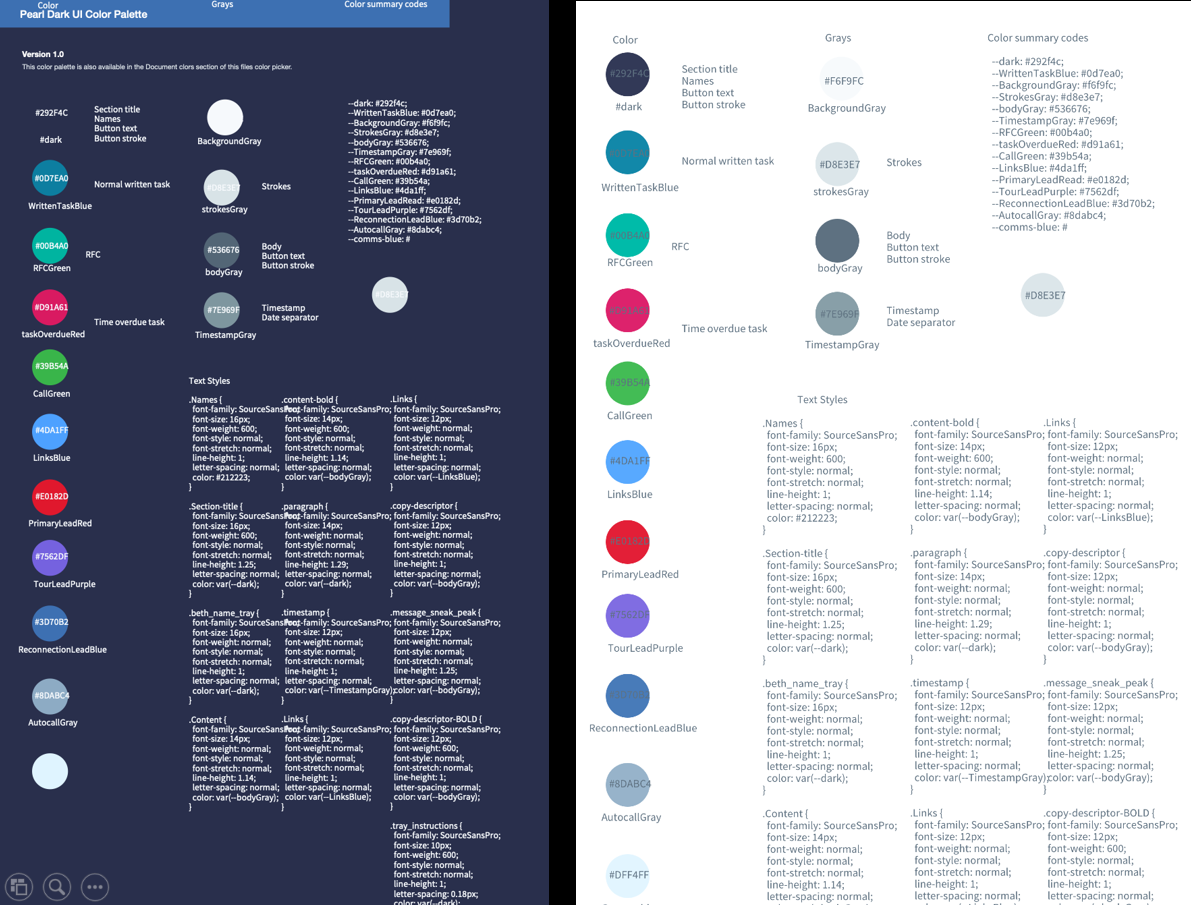

- Design‑Ops Foundations: Introduced token‑based colour, spacing, and motion variables so dark, light, and high‑contrast themes could ship together.

- Ethics Guardrails: Created a four‑point AI decision checklist: data provenance, bias audit, fallback modes, and human‑override options.

- Outcome‑Focused Metrics: Negotiated three experience KPIs that became part of company OKRs:

- Live‑connect time ≤ 90 seconds at the 75th percentile.

- Disposition accuracy ≥ 96 percent.

- Customer Effort Score ≥ 6 out of 7.
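To make the first KPI concrete, here is a minimal sketch of how a 75th-percentile check on live-connect time could be computed. It assumes the nearest-rank percentile method and uses made-up sample timings; the function names and data are illustrative and are not taken from Pearl's actual telemetry.

```python
import math


def percentile_nearest_rank(values, pct):
    """Return the pct-th percentile of values using the nearest-rank method."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    # Nearest-rank: the smallest value with at least pct% of the data at or below it.
    rank = max(math.ceil(pct / 100 * len(ordered)), 1)  # 1-based rank
    return ordered[rank - 1]


def meets_connect_kpi(connect_seconds, target=90, pct=75):
    """True when the pct-th percentile connect time is within the target."""
    return percentile_nearest_rank(connect_seconds, pct) <= target


# Hypothetical connect times in seconds, for illustration only.
sample = [42, 55, 61, 70, 78, 84, 88, 95, 120, 140, 63, 71]

if __name__ == "__main__":
    print("P75:", percentile_nearest_rank(sample, 75))
    print("KPI met:", meets_connect_kpi(sample))
```

Measuring at the 75th percentile rather than the average means a handful of very fast connections cannot mask a slow tail: three out of four shoppers must actually be connected within the target.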

PHASE-TO-PHASE JOURNEY

1. EXPLORE PHASE: LIFTING THE RUG

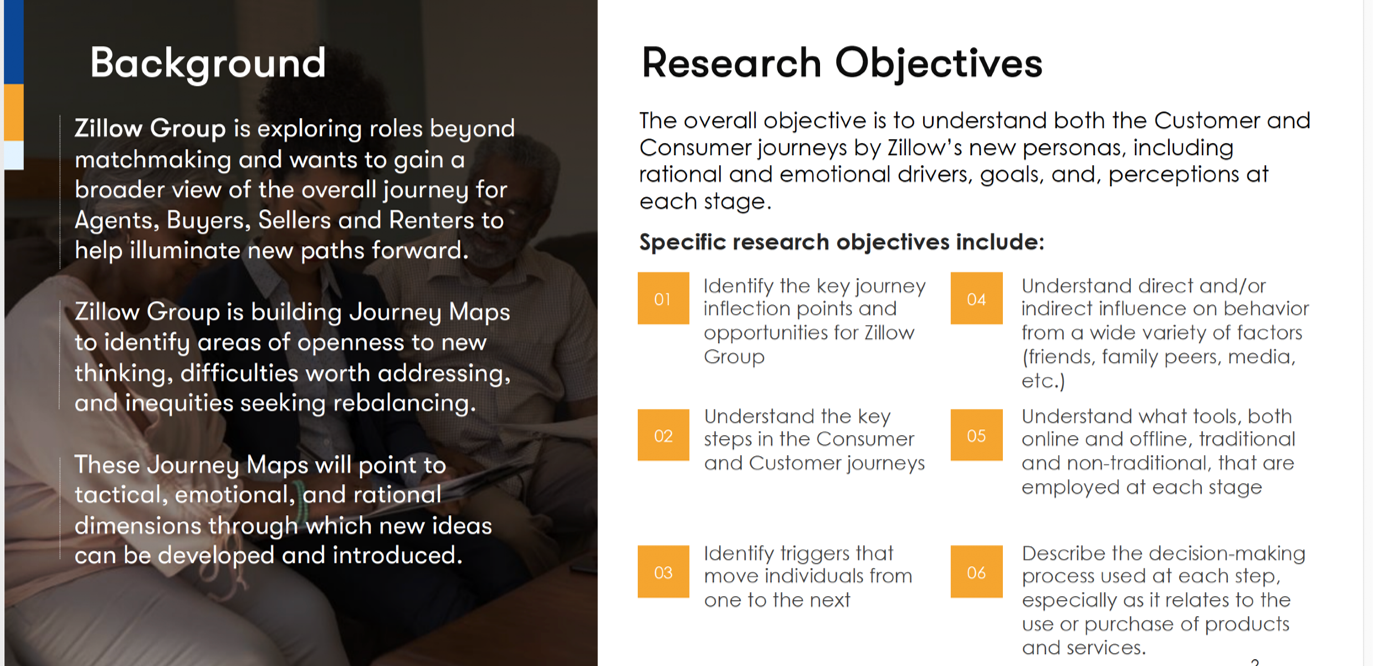

Objectives. Validate business viability, surface hidden friction, and locate ethical trip‑wires.

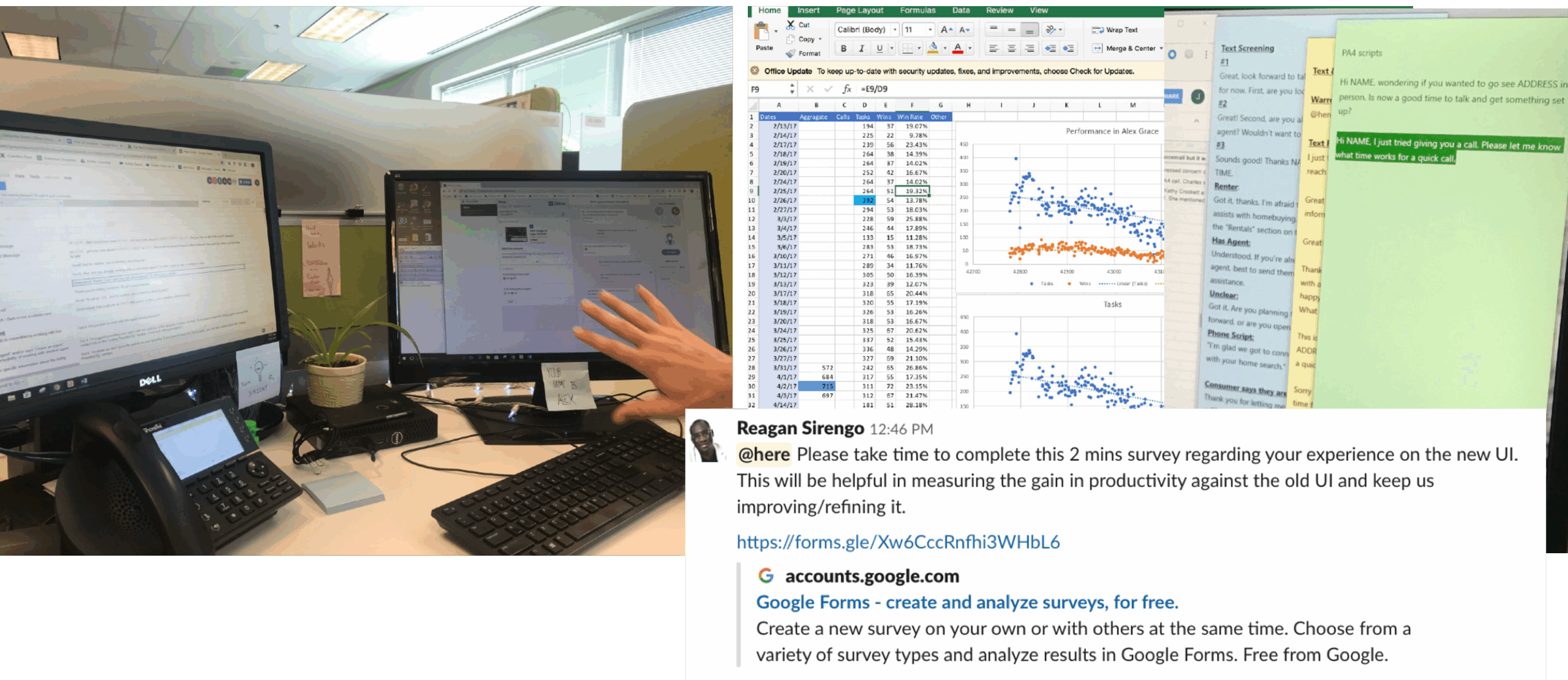

- Contextual inquiry. Shadowed 14 agents across Seattle, Phoenix, and Austin. Mapped ambient noise, secondary screens, and workflow switching.

- Diagnostic analytics. Partnered with Data Engineering to instrument untracked steps; revealed a seven‑second lag in the “Assign Beth” API that multiplied to 45 s of agent silence.

- Ethics workshop. Brought Legal, Policy, and Ops into a two‑hour fish‑bowl to ask, “When could Pearl hurt trust?” Outcome: a red‑flag list (e.g., mis‑routing to unlicensed agents, over‑sharing contact data).

Leadership Output. A Problem Playbook—six pages summarizing pain signals, business risk, and user sentiment. Shared in Confluence; referenced 118 times within the first quarter.

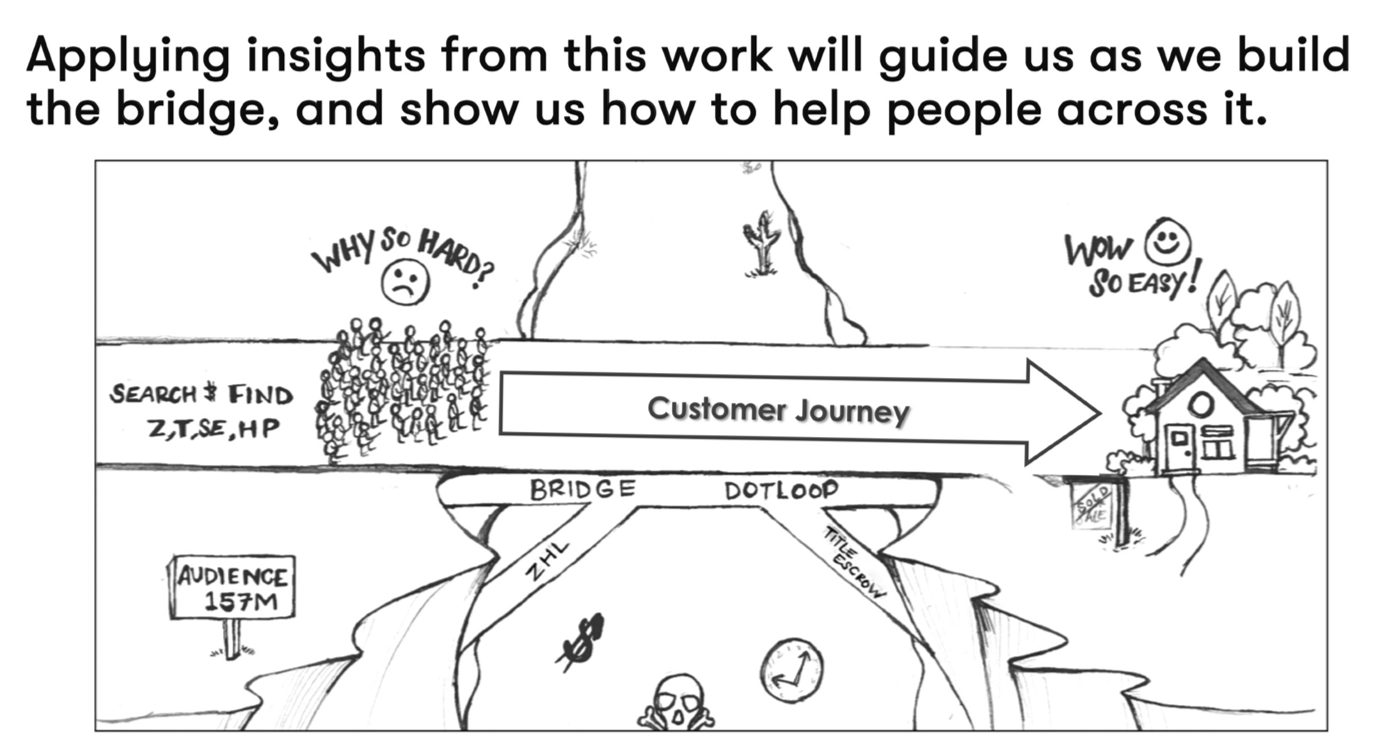

DEFINING THE NORTH STAR

North‑Star Principle: “Invisible AI, Visible Value.”

Let automation erase grunt work so humans can focus on empathy. Measure success in seconds saved, errors avoided, and trust earned.

- Why: Unlock faster, more human equity conversations for 1 million+ shoppers.

- Goals: Cut “agent-connect” time to < 90 seconds; raise session revenue; ship WCAG-AA from day one.

- Leadership Moves: I authored a one-page press-release narrative that framed Pearl as a conversational AI sidekick and tied success to Zillow’s OKRs. I logged model bias and privacy as guardrail risks and set a target NPS of greater than 55.

- Key Outputs: North-Star narrative and metric dashboard, insights and opportunities, business and user goals, success metrics.

PRODUCT ENTRY POINT

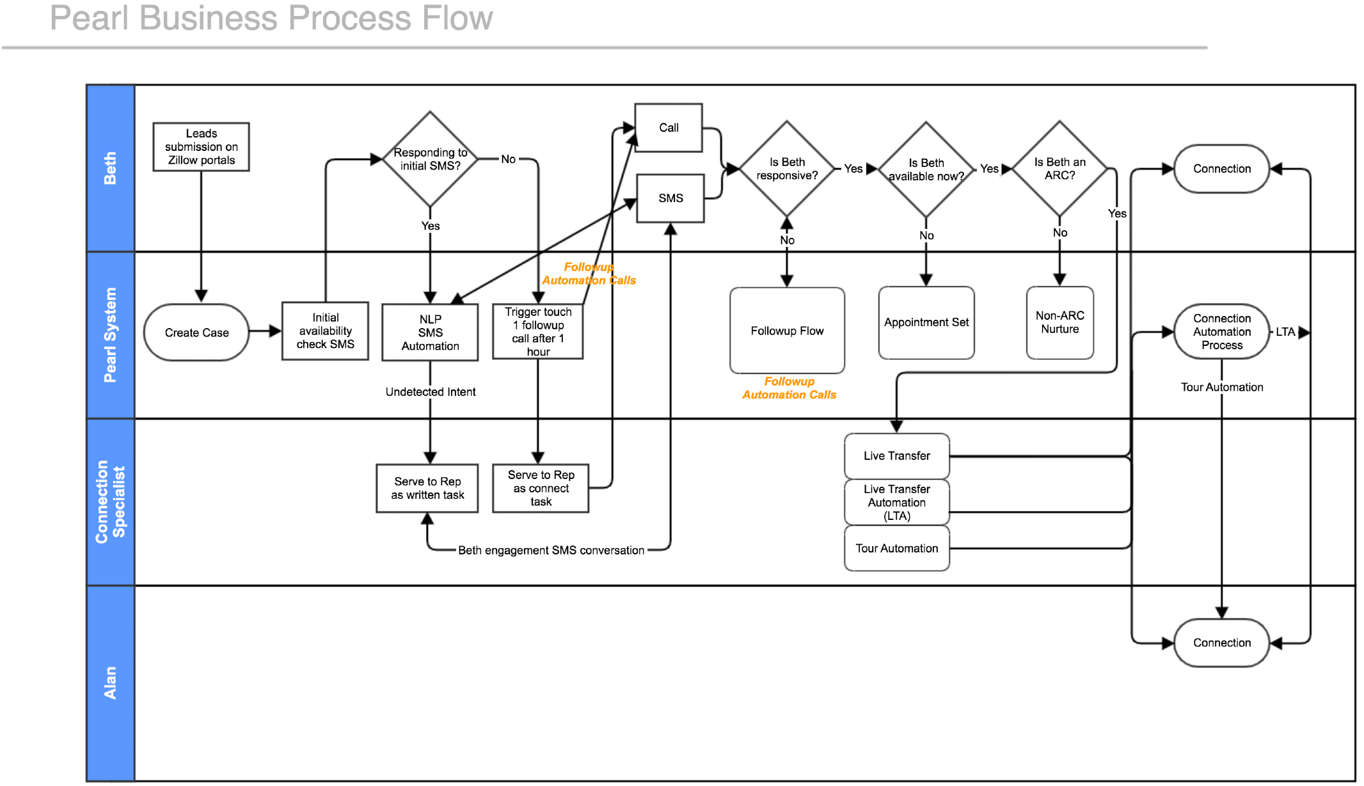

2. DISCOVER PHASE: DEFINING SUCCESS WITHOUT PRESCRIBING FEATURES

- Why: Prove the problem is real and pinpoint friction.

- Goals: Uncover blockers in the existing “Beth ↔ Alan” lead-routing flow.

LEADERSHIP MOVES

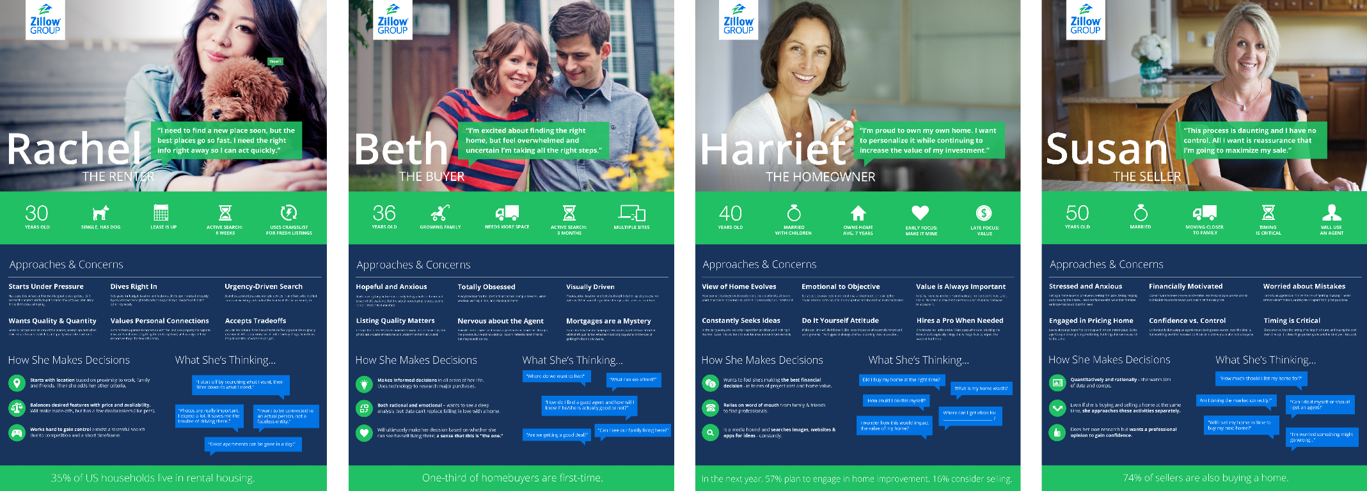

- Drafted two primary personas (time‑starved agents and KPI‑driven sales managers).

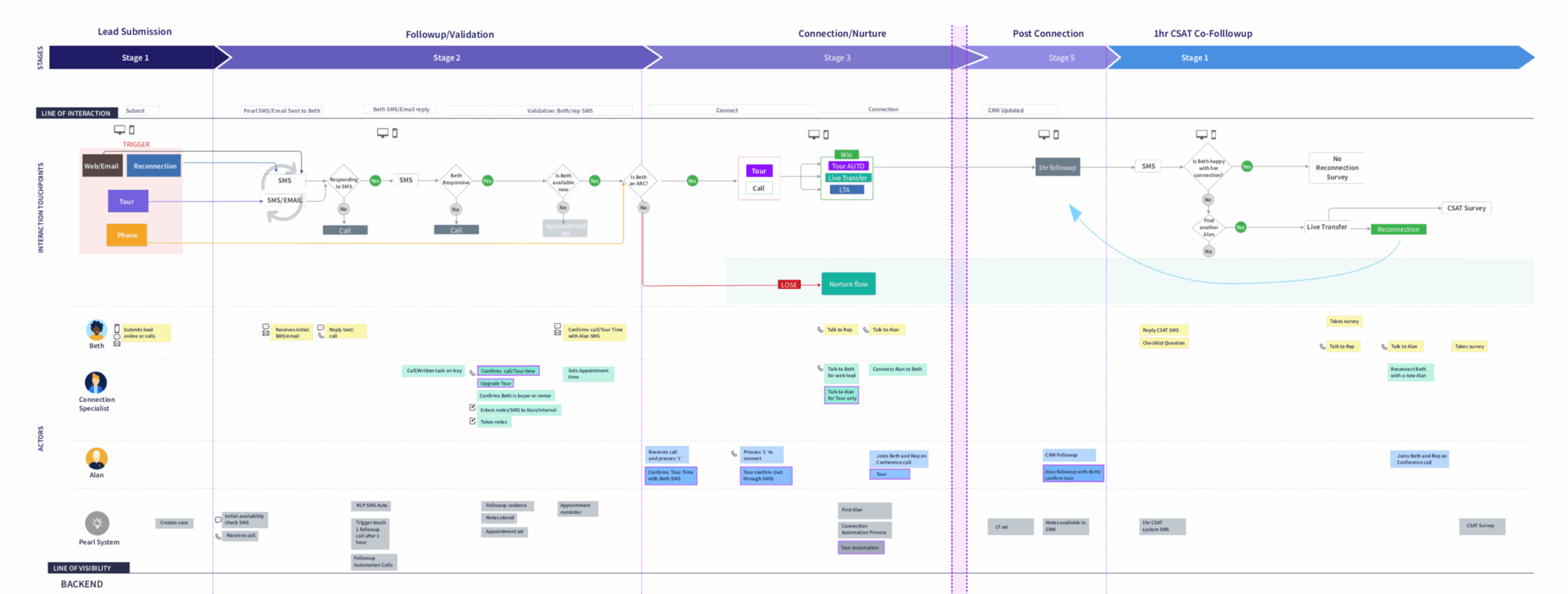

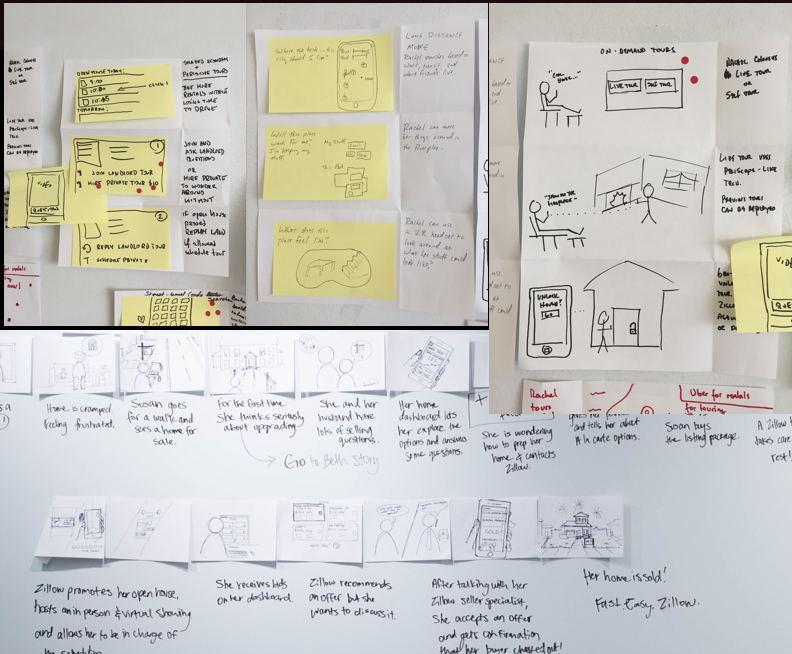

- Mapped the fourteen‑step journey from shopper click to agent call, annotating latency, liability, and delight moments.

- Locked KPIs into quarterly business goals so design outcomes would matter to Finance and Operations.

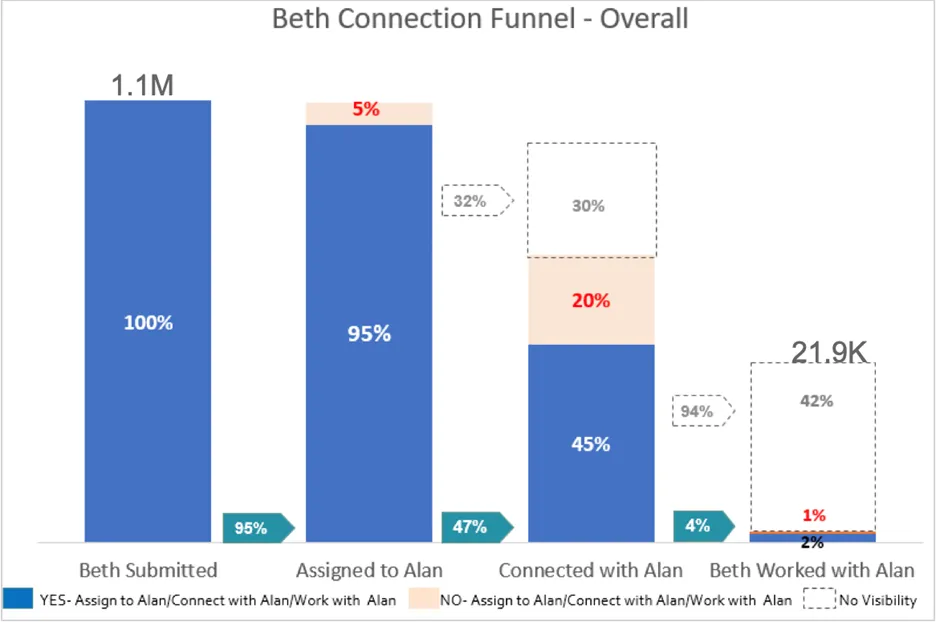

- I ran 30 in-depth agent interviews, a 1.1 M-record data audit, and a follow-up survey of incomplete sign-ups. Findings: only 2 % of shoppers (“Beths”) ever formed a confirmed relationship with their matched agent (“Alan”)—with visibility gaps in 32 % of cases.

- Key Outputs: JTBD map, personas, competitive analysis, user and stakeholder interviews, opportunity brief, risk heat-map.

PERSONAS

This persona work grew out of numerous user interviews, which helped us identify our target users: people who are savvy despite many constraints and who don’t have time for messy content.

- Alan, self‑employed agent, phone glued to ear, compensated on closure; time = money.

- Chanel, sales‑floor manager, juggles team KPIs and compliance audits.

RESEARCH

Like any good renovation, we knew we had to start by laying the groundwork. We ran four user research studies and audited the existing UI.

- Drafted two primary personas (time‑starved agents and KPI‑driven sales managers).

- Mapped the fourteen‑step journey from shopper click to agent call, annotating latency, liability, and delight moments.

- Locked KPIs into quarterly business goals so design outcomes would matter to Finance and Operations.

APPROACHES AND TOOLS

I traveled to various offices to observe and talk to users, documenting how people use the tool, the opportunities, and their unmet goals. I wanted product teams to be able to refer to these research findings easily during the redesign.

RECOMMENDATIONS

- Design designated areas for specific notifications and alerts in convenient, helpful modules. This would allow agents to multitask better and find things more easily.

- Redesign workflows to decrease the number of clicks needed to perform common tasks.

- Adjust the color schemes so that text is comfortable to read on screen for long periods, and improve accessibility overall.

- Design with an understanding of their customer journeys across various channels and contexts, and support them through UI design.

JOURNEY MAPPING: FIGURING OUT THE PROBLEM

To understand the problem, I interviewed a few users to gain insight. Once I had a hunch about what might be happening, I followed up with a larger survey of people who had signed up but not completed the setup. I visualised the 14‑step chain from Beth’s “Contact Agent” click to Alan’s first call. Annotated latency, liability, delight moments.

Experience KPIs (negotiated with Finance & Ops).

- Live‑connect time ≤ 90 s (P75).

- Disposition accuracy ≥ 96 %.

- Customer Effort Score ≥ 6 / 7.

Leadership Touch. Ran a “Lightning Critique” with VP Product: we scored each step for Value, Confidence, and Effort on sticky notes, exposing feature bloat and data gaps.

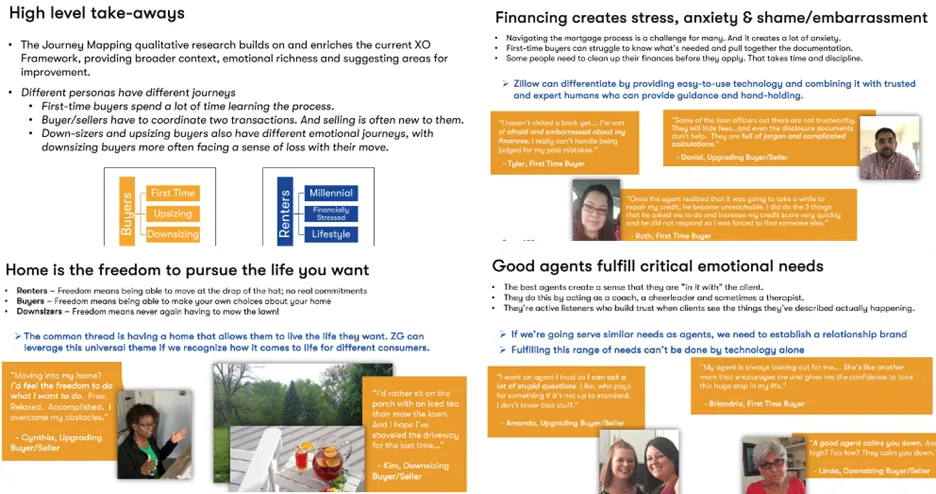

INITIAL FINDINGS

- Overall, 2% of Beths who submitted a contact request have a confirmed working relationship with an Alan.

- Of the 1.1M Beths who submitted, 21.9K (2%) have a confirmed relationship with an Alan.

- Limited visibility is a challenge. We currently cannot tell whether:

- Beth connected with Alan, in 32% of the cases where Beths were assigned to Alans.

- Beth is working with Alan, in 94% of the cases where Beth was connected with Alan.

INITIAL GOAL

The criteria for a good experience outcome

- Memorable: Resonates with both the user and the product team

- Evidence-based: Rooted in customer insights

- Measurable: Provides a basis for measuring success

- End-in-itself: Describes a problem-to-be-solved/job-to-be-done; never refers to a solution or feature

- Scoped: Describes the smallest meaningful and actionable unit of customer value; Has one verb at its core

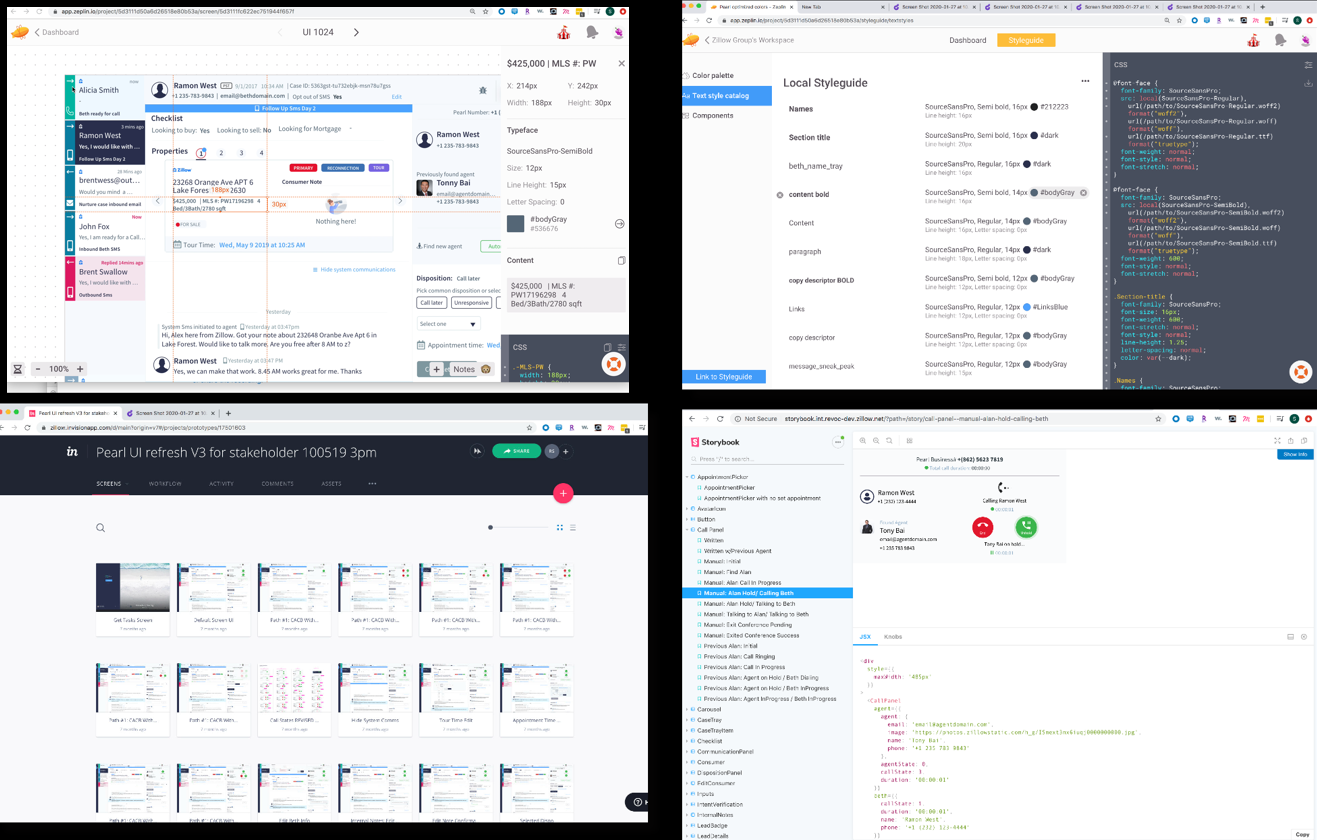

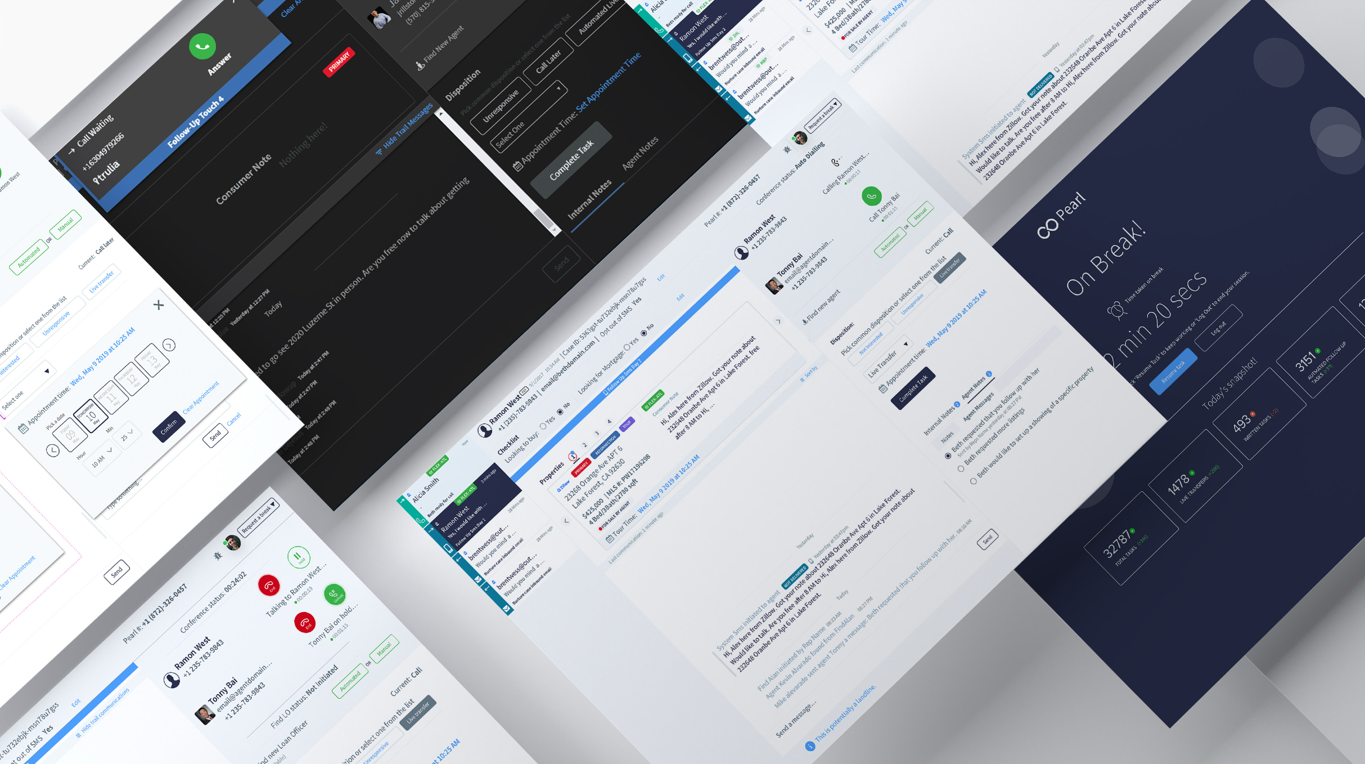

3. DESIGN PHASE: CO-CREATE CONCEPTS

WHY: Ship an MMP that proves AI can be both fast and transparent.

- Generate bold options, pick the strongest.

- Derisk fast; validate desirability, feasibility, and viability.

- Secure commitment and ownership.

- Ship an accessible, performant product without slowing velocity.

HOW: Paired with engineers to wire axe‑core into CI; released to 20 % of users across regions for A/B comparison.

OUR TARGETS

- Short-list flows that cut clicks and surface live context.

- Hit ≤ 90 sec connect time in pilot; maintain full keyboard access.

- Green-light MVP; assign DRIs for backend refactor, AI tuning, and WCAG audits.

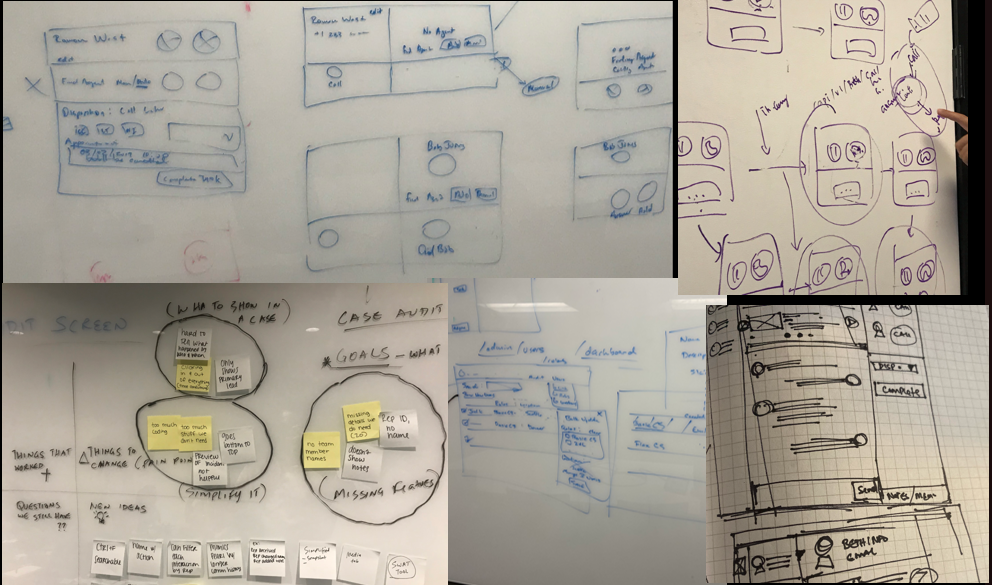

LEADERSHIP MOVES via rapid iteration:

- Sketch‑jams. Mixed tables of engineers, designers, and ops; forced divergent ideas in 45‑minute sprints.

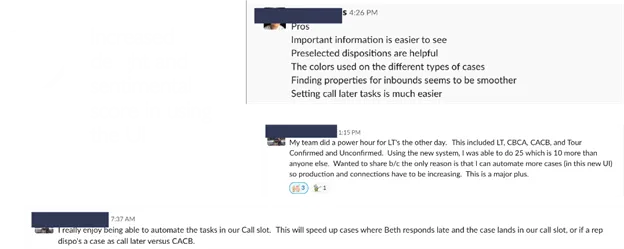

- Ran inclusive sketch‑jams; produced AI‑assisted quick‑reply chips and auto‑filled data cards.

- Crazy‑8s on copy. Copywriters and PMs rewrote system messages eight ways to test tone and clarity.

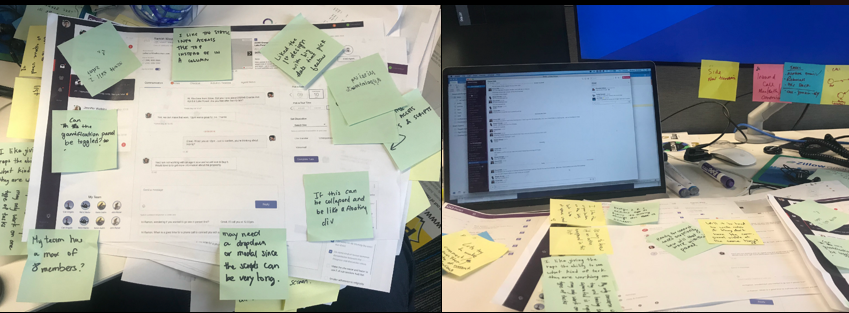

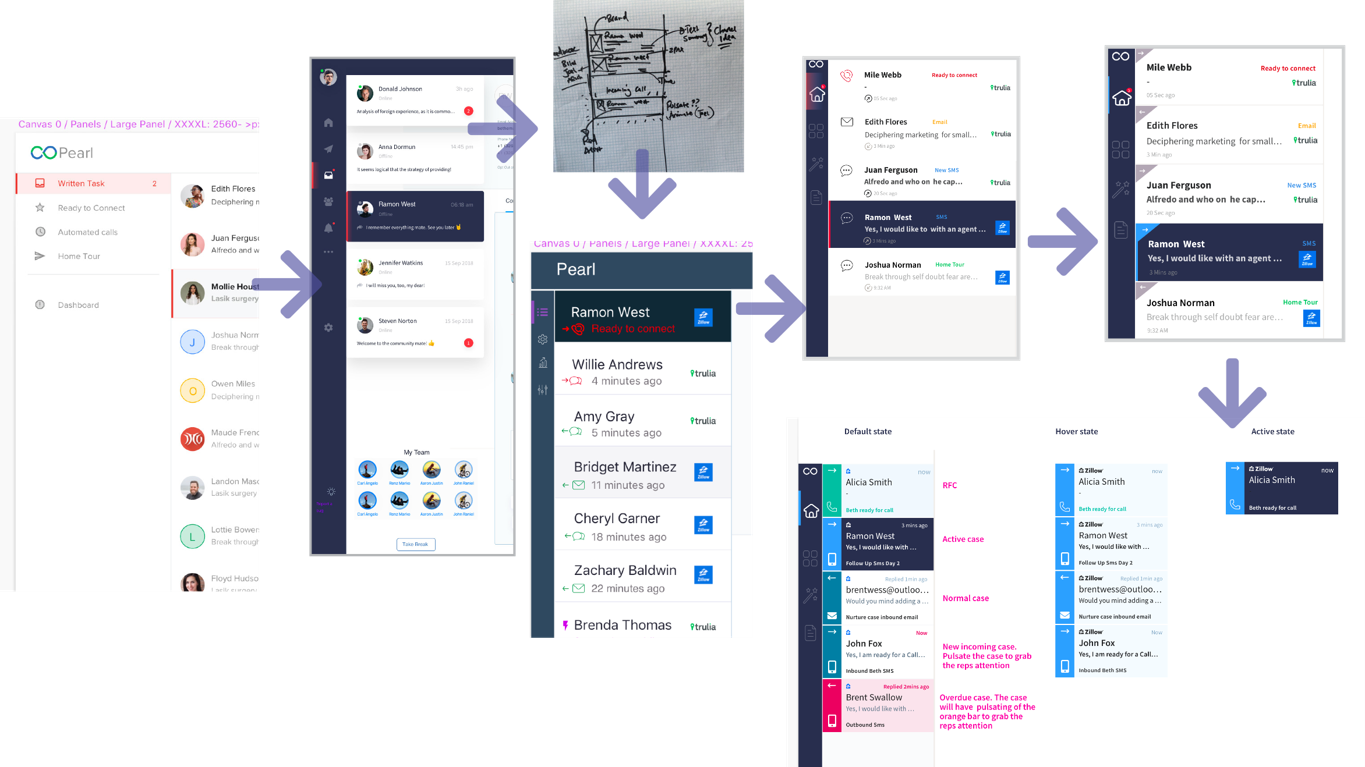

- Built a mobile‑optimized, interactive InVision prototype in a five‑day sprint and ran moderated tests on UserTesting.com; pilots with power users surfaced color‑contrast tweaks and shortcut refinements.

- Introduced a token‑based design system so colours, spacing, and states remained consistent across products.

- Rapid storyboards highlighted AI pre-fill and hot-key dispositions to erase copy-paste toil.

- Leadership Artefact. Published a “Decision Diary” explaining why we killed or shipped each concept. Reduced “why” meetings by 40 %.

Key Outputs: IA, user flows, screen flows, concept design/sketches, wireframes/mocks, clickable/interactive wireframes, usability research, design token system, detailed UI, pivot-or-proceed decision, decision log, roadmap v1.0, and accessibility test plan. Hot‑key flows cut task time by 38%; core audits passed 100% contrast and keyboard‑nav checks on the first run.

INSIGHT-DRIVEN INTERACTIONS

- Replaced seven‑click disposition with a “One‑Tap Top 3” surfaced by AI ranking → cut error rate by 37 %.

- Introduced Keyboard “.” (dot) Quick‑Nav: press “.” anywhere to cycle focus through action buttons → average task time −18 %.

- Switched to tokenised high‑contrast palette → 100% WCAG AA compliance, agent eye‑strain complaints down per support tickets.

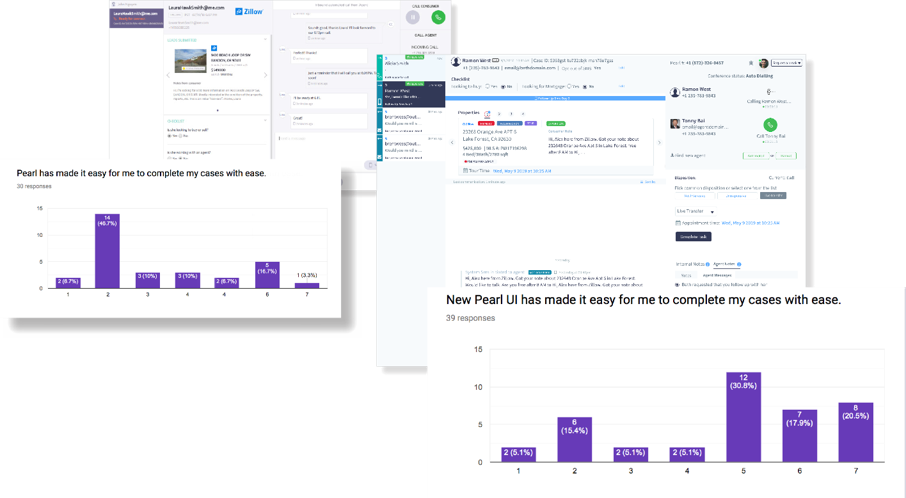

- Customer Effort Score jumped from 3.5 / 7 to 6.5 / 7.

- The disposition error rate fell from 5.30% to 3.33%.

- Average live‑connect time dropped below the 90‑second goal.
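The WCAG AA claim for the high-contrast palette rests on a published formula, so it can be checked mechanically. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio, with the AA thresholds of 4.5:1 for normal text and 3:1 for large text. The function names and the sample colours are illustrative assumptions, not values from Pearl's palette.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x definition)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a '#RRGGBB' colour, from 0.0 (black) to 1.0 (white)."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground, background):
    """Contrast ratio between two colours, from 1.0 up to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground, background, large_text=False):
    """WCAG AA requires at least 4.5:1 for normal text and 3:1 for large text."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


if __name__ == "__main__":
    # Illustrative greys on white: one just passes AA, the other just fails.
    for colour in ("#767676", "#777777"):
        print(colour, round(contrast_ratio(colour, "#FFFFFF"), 2), passes_aa(colour, "#FFFFFF"))
```

Running a check like this over every text and surface token pair is one way a palette can be validated before it reaches a screen, rather than audited afterwards.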

SIMPLIFY THE FLOW

I designed states for every piece of the flow shown on the next page, but collaboration was essential. These seemingly simple iterations solved the problems of the original flow, but like most “simple” design, it took a lot of complexity to get there. To increase productivity, I proposed a change to the flow in which AI pre-fills commonly requested information during the conversation, sparing agents the copy and paste: a quick win.

PROPOSED NEW FLOW

Goals: Hit ≤ 90 sec connect time in pilot; maintain full keyboard access.

FIXING THE PROBLEM

I collaborated with the team via design thinking workshops and held several design crits with the larger design family from the company on the design. Here’s some of the output of that collaboration.

The discussions with friendly and passionate colleagues were among the best parts of working on this project. We were able to deep-dive into the meaty questions, the details, and the far-flung ideas about what could be possible. As a designer, it is incredible to work with developers and product managers who care so much about the design process and have so many great UX ideas.

SKETCHING AND WHITEBOARD SESSIONS

PEARL WIREFRAMES WALKTHROUGH

DELIVER AND VALIDATE: SHIPPING WITHOUT SPOOKING THE FLEET

SUMMARY

- Paired with back‑end leads to migrate to a GraphQL gateway while legacy APIs kept existing users working.

- Wired the core accessibility scanner into the Jenkins pipeline; any failing component blocked the merge.

- Rolled out to twenty percent of agents behind a test environment with one‑click rollback.

- Held a daily five‑minute “metric pulse” so the whole team watched live results together.

- After four weeks the pilot achieved:

- Median live‑connect time dropped from 180 seconds to 85 seconds.

- Disposition error rate fell from 5.3 percent to 3.33 percent.

- Customer Effort Score climbed from 3.5 to 6.5 out of 7.

- The senior leadership featured Pearl in the quarterly board deck as evidence that design thinking accelerates revenue capture.

Gradual Rollout.

- Canary release to 20 % of agents across five regions; automatic rollback toggle in LaunchDarkly.

- Daily stand‑ups included a 5‑minute “Metric Pulse” where we scanned live dashboards together.
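A percentage rollout with a rollback toggle can be sketched with deterministic hashing. The example below is a generic illustration of the technique and does not reproduce LaunchDarkly's API or its bucketing algorithm; the salt, function names, and agent IDs are all hypothetical.

```python
import hashlib


def rollout_bucket(agent_id, salt="pearl-new-ui"):
    """Map an agent ID to a stable bucket in 0..99 (salt is a hypothetical flag key)."""
    digest = hashlib.sha256(f"{salt}:{agent_id}".encode("utf-8")).hexdigest()
    return int(digest[:8], 16) % 100


def in_canary(agent_id, percent=20, kill_switch=False):
    """True when the agent should see the new UI.

    kill_switch models a one-click rollback: when set, nobody is in the canary.
    """
    if kill_switch:
        return False
    return rollout_bucket(agent_id) < percent


if __name__ == "__main__":
    agents = [f"agent-{n}" for n in range(10_000)]  # made-up IDs
    share = sum(in_canary(a) for a in agents) / len(agents)
    print(f"Share of agents in canary: {share:.1%}")
```

Because the bucket depends only on the agent ID and the salt, an agent sees the same experience on every visit, and raising the percentage only ever adds agents to the new UI instead of reshuffling who is in it.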

SAMPLE FEATURE AUDIT AND SCORING WITH USERS

SAMPLE DESIGN ITERATIONS WITH USERS

BUILDING THE TOKEN DESIGN SYSTEM
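As a rough illustration of the token idea, semantic names such as "primary text" can map to different raw values per theme, so components never hard-code a colour, spacing, or motion value. The token names and values below are hypothetical and are not taken from Pearl's library; the helper simply reports tokens that a theme forgot to define, which is the kind of gap that would stop themes from shipping together.

```python
# Hypothetical semantic tokens; every theme must define the same names.
THEMES = {
    "light": {
        "color.text.primary": "#1A1A1A",
        "color.surface": "#FFFFFF",
        "space.md": "16px",
        "motion.fast": "120ms",
    },
    "dark": {
        "color.text.primary": "#F5F5F5",
        "color.surface": "#121212",
        "space.md": "16px",
        "motion.fast": "120ms",
    },
    "high-contrast": {
        "color.text.primary": "#FFFFFF",
        "color.surface": "#000000",
        "space.md": "16px",
        "motion.fast": "0ms",
    },
}


def resolve(theme, token, themes=THEMES):
    """Look up the raw value of a semantic token in the given theme."""
    return themes[theme][token]


def missing_tokens(themes):
    """Return {theme: [token names it lacks]} for themes with gaps."""
    all_names = set()
    for tokens in themes.values():
        all_names.update(tokens)
    gaps = {}
    for theme, tokens in themes.items():
        absent = sorted(all_names - set(tokens))
        if absent:
            gaps[theme] = absent
    return gaps


if __name__ == "__main__":
    print(resolve("dark", "color.surface"))
    print(missing_tokens(THEMES))  # an empty dict means every theme is complete
```

A completeness check like this can run alongside the automated contrast checks, so a new theme cannot merge with a token left undefined.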

ADDRESSING ACCESSIBILITY AND USABILITY ISSUES

FROM OLD TO NEW

NEW EXPERIENCE TEST ENVIRONMENT

HOW WE ROLLED OUT THE NEW UI

- The back-end development team needed to update its tech stack while maintaining the one that was powering the existing users.

- Front-end development had a head start, as we collaboratively built the front end, tested it with users, and iterated before plugging in back-end functionality.

- Released the new UI to 20% of users, spread across different regions and skill sets, to learn and improve as we firmed up the designs.

KEY TAKEAWAYS: DESIGNING FOR AI

Machines are clearly superior to humans at certain tasks, but before we delegate something to them, we should ask ourselves some questions: Will the machine really be better than a human at this task? Will we benefit from this particular application of automation? What are the ethical implications? Before automating a task, we should consider very carefully the impact on everyone involved and what role we still really want humans to play in our society.

- Speed is a feature—clarity is a necessity. AI can feel magical only when latency is invisible and the user knows who is in control.

- Tokens outlive trends. By investing early in semantic tokens, we future‑proofed accessibility, theming, and brand consistency.

- Ethics is not a checklist but a habit. A quarterly bias audit uncovered unintended exclusion of agents in low‑volume zip codes—catching it early saved a PR nightmare.

- Design + Finance = Power. When UX metrics appear in revenue forecasts, design graduates from “service” to “strategy.”

4. RUN & SCALE PHASE

LAUNCH AND LEARN

WHY: Measure real-world impact, loop insights back in, sustain growth, safeguard trust, ensure gains persist, and pave the road for future AI features.

HOW:

- Design System Ops. Documented contribution model; first external feature PR merged by another team within six weeks.

- Voice‑of‑Agent Loop. Embedded a one‑click sentiment poll inside Pearl (“How was your workflow today?”), generating 1,200 data points per month.

- Rolled out a dynamic UI that hides irrelevant controls (e.g., no “Call” button when sending SMS).

- Continuous Ethics Review. Quarterly bias audit on NLP models; logged false positives and user complaints.

- Outcome. System accuracy rose, agent delight scores improved, and the updated design library became the template for three additional Zillow internal tools.

GOALS: Raise Customer Effort Score from 3.5 to 6.5 out of 7; cut disposition errors to below 4 %.

OUTCOMES – HOW WE MOVED THE NEEDLE:

- Adaptable screens that improve web accessibility and create consistency.

- Dynamic screens that show only the features relevant to the task at hand (e.g., no call buttons while sending an SMS).

- One-click approach for frequently used dispositions.

- Content strategy: We reduced the amount of content overall, making sure that users knew where to focus.

- Improved accessibility and introduced keyboard shortcuts.

- Improved dashboard and case audit tools for better reporting.

- Introduced a built-in user-score tool to capture quick feedback for Pearl improvements.

Leadership Moves: Staged rollout behind feature flags, with A/B tests on hot-key adoption and AI tag accuracy. Results: CES jumped to 6.5/7; error rate fell to 3.33 % (-37 %). Added a user-score micro-survey for continuous feedback.

BUSINESS IMPACT:

- Lead‑to‑agent confirmations ↑ 44 %.

- Support tickets about Pearl UI ↓ 32 %.

- Design tokens now underpin three sister tools (Loan Officers, Rentals CRM, New‑Homes Concierge).

Key Outputs: Launch report, experiment dashboards, knowledge-base entry, KPI tracker.

OVERALL WINS

- Customer Effort Score increased from 3.5/7 to 6.5/7.

- System accuracy: made the UI more intuitive and capable so that reps make fewer errors, by introducing:

- Top dispositions with one click

- Automated Find Agent

- Disposition errors trended down from 5.30% to 3.33%.

| KPI | Pre-Pearl | Post-Pilot | Δ |
| --- | --- | --- | --- |
| Customer Effort Score | 3.5 / 7 | 6.5 / 7 | +86 % |
| Disposition Error Rate | 5.30 % | 3.33 % | –37 % |
| Confirmed Agent Relationships | 2 % | ↑ 3× (pilot cohort) | +200 % |

- Increased delight and sentiment scores for the UI.
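The Δ column in the KPI table above is a relative change against the pre-Pearl baseline. The short sketch below reproduces that arithmetic from the table's own figures; the function name is illustrative, and the 3× uplift is expressed as a move from 1 to 3 units of the baseline.

```python
def relative_change_pct(before, after):
    """Percentage change from before to after, relative to before."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100


# Figures taken from the KPI table.
ces_delta = relative_change_pct(3.5, 6.5)      # Customer Effort Score
error_delta = relative_change_pct(5.30, 3.33)  # Disposition error rate
uplift_delta = relative_change_pct(1, 3)       # 3x uplift in confirmed relationships

if __name__ == "__main__":
    print(f"CES: {ces_delta:+.0f} %")
    print(f"Disposition errors: {error_delta:+.0f} %")
    print(f"Confirmed relationships: {uplift_delta:+.0f} %")
```

Note that the error-rate figure is a relative reduction of about 37 %, which corresponds to an absolute drop of 1.97 percentage points.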

DEPTH OF UX LEADERSHIP

- Vision & Storytelling: Rallying diverse functions behind a launch‑day narrative instead of feature lists.

- Research Craft: Blending ethnography, analytics, surveys, and jobs‑to‑be‑done to surface blind spots in AI suggestions.

- Design‑Ops Infrastructure: Building token libraries, accessibility gates, and governance so craft scales effortlessly.

- Cross‑Functional Influence: Embedding design metrics in finance dashboards and coaching PMs on story mapping.

- Ethical Stewardship: Running bias audits, defining human‑override policies, and displaying NLP confidence levels in the UI.

- Scalable Facilitation: Delivering more than thirty on-site and remote workshops using the lean UX process.

- Product Ownership: Staying engaged through rollout, tracking live telemetry, and publishing candid post‑mortems.

- Data-Informed Storytelling: Every decision—from North-Star framing to pilot telemetry—was tied to a business OKR and a user-centric KPI for execs to lean in.

- Ethics as Velocity, Not Drag: By automating contrast checks and bias reviews, compliance became a sprint ceremony, not a last-minute scramble.

- AI + Accessibility = Scale: Hotkeys, screen-reader labels, and language models together cut task time without cutting anyone out.

- Narrative Reviews Trump Slide Decks: Two-page memos forced clarity, sped decisions, and built cross-functional trust.

- Accessibility evangelist: WCAG-AA baked in from sprint 0; keyboard shortcuts, contrast tokens, and screen-reader labels treated as first-class features.

- Culture catalyst: By coaching pods in narrative reviews and inclusive design rituals, I boosted velocity, consistency, and cross-team trust.

IMPACT AT A GLANCE

| Metric | Before | After |
| --- | --- | --- |
| Agent connect time | ~3 min | < 90 sec |
| Confirmed shopper-agent relationships | 2 % | +3× uplift (pilot cohort) |
| Customer Effort Score | 3.5 / 7 | 6.5 / 7 |
| Disposition error rate | 5.30 % | 3.33 % |
| Accessibility compliance | Ad-hoc | 100 % automated AA pass |

KEY REFLECTIONS: A 90-Second Promise Fulfilled

Today Pearl handles millions of real-time homeowner conversations, proving that when AI is wrapped in thoughtful design and ethical guardrails, it doesn’t just move metrics; it moves lives. Guiding Pearl from a brittle prototype to a high‑speed, high‑trust backbone demanded more than beautiful screens. It required orchestrating insights, ethics, engineering, and culture. By championing an evidence‑driven, accessibility‑first mindset, I proved that deep UX leadership can derisk AI, delight users, and unlock measurable business value, all without losing the human touch that makes home‑buying meaningful. That’s the bar I bring to every product I lead: ambitious, data-grounded, human-centered, and ready for big-tech scale.
